Memristor Synapses for Neuromorphic Computing

Neuromorphic computing, which imitates the principle behind biological synapses with a high degree of parallelism, has recently emerged as a promising candidate for novel and sustainable computing technologies. The first step toward realizing a massively parallel neuromorphic system is to develop an artificial synapse capable of emulating synaptic functionality, such as analog modulation, with ultralow power consumption and robust controllability. We begin this chapter with a simple description of neuromorphic systems and memristor synapses. We then introduce and evaluate state-of-the-art neuromorphic hardware technology in terms of the novel functional materials and device architectures that have been extensively explored in recent years toward the implementation of fully neuromorphic computers. Finally, we briefly describe artificial neural networks based on memristor synapses in the form of crossbar arrays.

Modern computers and electronics, such as smartphones and supercomputers, have been developed in accordance with Moore’s law, which implies improvement in cost, speed, and power consumption by scaling down devices. However, fundamental physical limits and increased fabrication costs hinder the sustainable development of computing technology. Moreover, with the advent of the big data era, the volume of unstructured data and the complexity of data are increasing explosively, which strains conventional computing technology because of the von Neumann bottleneck.

Neuromorphic systems, which mimic the nervous system in the brain, have recently become known as strong candidates to overcome these technical and economic limitations owing to their proficiency in cognitive and data-intensive tasks, together with their low power consumption. To implement these neuromorphic systems successfully, it is of utmost importance to research and develop artificial synapses that reproduce synaptic functions with high reliability and low energy consumption. Among the plethora of possible devices, memristors have attracted particular attention because of their desirable characteristics as artificial synapses, including high device speed, small footprint, low energy consumption, and analog switching.

Neuromorphic systems

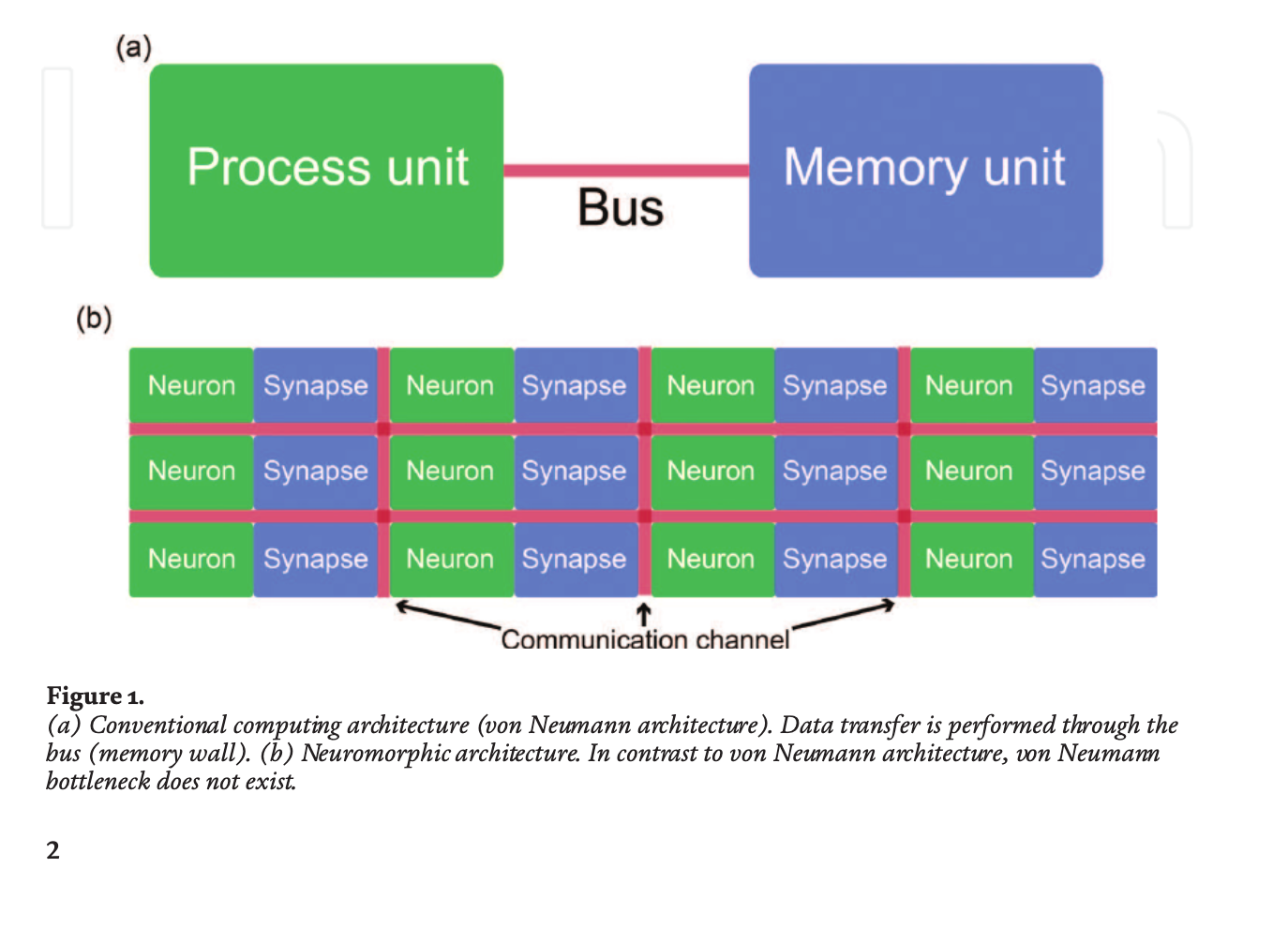

The conventional computing architecture, that is, the von Neumann architecture, forms the groundwork for modern computing technologies. Despite tremendous growth in computing performance, this classical architecture suffers from the von Neumann bottleneck, which results from data movement between the processor and the memory unit. This memory wall issue causes high power consumption and low speed, and it hinders the continued development of computing technologies.

Moreover, artificial neural network (ANN) algorithms, such as deep learning, are applied to image classification, sound recognition, and specific complex tasks (e.g., AlphaGo). Although ANN algorithms have exhibited superior performance over conventional computing techniques, they are, at present, run on the von Neumann architecture; hence, considerable time and energy are required for their operation. Neuromorphic architecture, a bio-inspired computing architecture, is one of the most promising candidates to resolve these problems. Neuromorphic systems take their inspiration from the cerebral nervous system, which consists of massively parallel connections between neurons (i.e., processors) and synapses (i.e., memory) and therefore has no von Neumann bottleneck.

In the von Neumann architecture, the processor and memory are separate, which leads to the von Neumann bottleneck. In contrast, in the neuromorphic architecture, the neurons and synapses are combined, alleviating the bottleneck issue. The neurons are simple computing units, the synapses are local memory units, and the communication channels (red line) connect numerous neurons and synapses. It should be noted that the practical purpose of neuromorphic systems is not to replace the von Neumann architecture completely, but to supplement the conventional architecture where it falls short, especially for intelligent tasks such as image recognition and natural language processing.

Memristor synapses

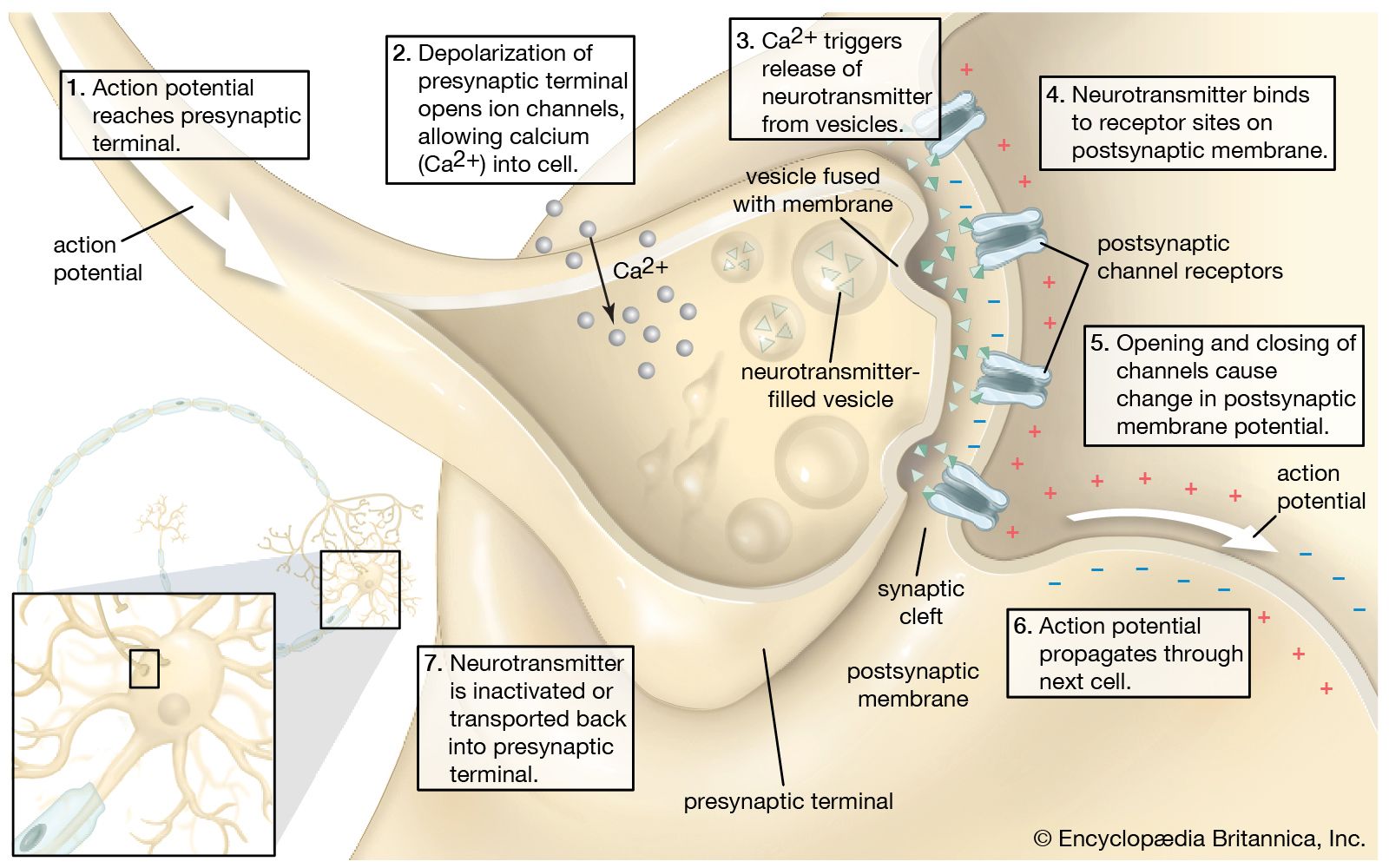

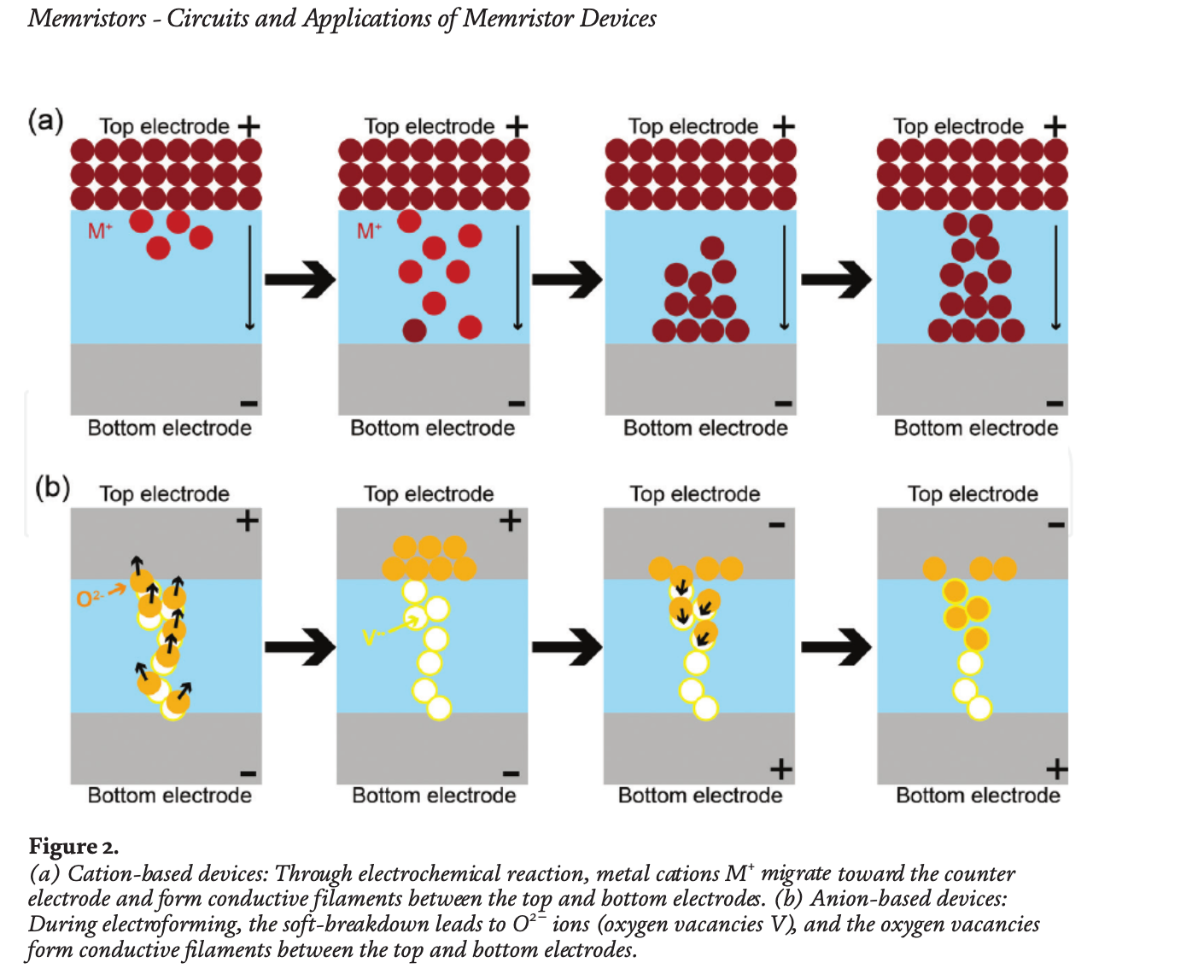

Memristors, which consist of a storage layer inserted between top and bottom electrodes, can undergo dynamic reconfiguration within the storage layer when electrical stimuli are applied, resulting in a resistance modulation referred to as the memory effect. The changed resistance state is retained even after the electrical inputs are removed, so the state of a memristor depends on the history of applied electrical stimuli. These capabilities lead to analog switching, which resembles the behavior of biological synapses, where the strength (or synaptic weight) can increase or decrease depending on the applied action potential. When a neuromorphic architecture is implemented on the conventional computing architecture, the synaptic weights are stored in the memory unit and are continuously read into the processor unit to transfer information to post-neurons. In other words, in practice, the von Neumann bottleneck remains a challenge. However, in memristor synapse-based neuromorphic systems, the synapses can not only store a specific weight but also naturally transmit information to post-neurons, overcoming the von Neumann bottleneck and improving system efficiency. In addition to analog switching, memristors have exhibited desirable device properties, including nanoscale footprint, long endurance and retention, nanosecond switching speed, and low power consumption. Owing to these characteristics, memristors have emerged as promising candidates for artificial synapses.
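The store-and-transmit behavior described above can be illustrated with a toy model. The following is a minimal sketch, not a device model from this chapter: the class name `MemristorSynapse`, the linear update rule, and all numerical values are hypothetical and chosen only for illustration. Each synapse keeps its conductance between pulses (memory), and a read voltage produces a current through Ohm's law that is summed on a shared output line before reaching the post-neuron.

```python
from dataclasses import dataclass


@dataclass
class MemristorSynapse:
    """Toy synapse; all default values are hypothetical, not measured data."""
    g: float = 1e-6       # present conductance in siemens (the stored weight)
    g_min: float = 1e-6   # lowest reachable conductance (OFF state)
    g_max: float = 1e-4   # highest reachable conductance (ON state)
    step: float = 1e-5    # conductance change per pulse (assumed linear)

    def pulse(self, polarity: int) -> None:
        """Apply one programming pulse: +1 potentiates, -1 depresses."""
        updated = self.g + polarity * self.step
        self.g = min(self.g_max, max(self.g_min, updated))

    def read(self, voltage: float) -> float:
        """Ohm's law: current through the device at a small read voltage."""
        return self.g * voltage


def post_neuron_current(synapses, voltages):
    """Sum the currents of all synapses sharing one output line."""
    return sum(s.read(v) for s, v in zip(synapses, voltages))


synapses = [MemristorSynapse() for _ in range(3)]
for _ in range(4):
    synapses[0].pulse(+1)   # the state accumulates with the pulse history
synapses[1].pulse(+1)
synapses[1].pulse(-1)       # one pulse up, one down: back near the start

read_voltages = [0.1, 0.1, 0.0]   # pre-neuron inputs in volts (hypothetical)
total = post_neuron_current(synapses, read_voltages)
print(f"current into post-neuron: {total:.2e} A")
```

In this sketch the weight is never copied to a separate processor: the same element that stores the conductance also performs the multiplication during the read, which is the point the paragraph above makes about avoiding the bottleneck.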

Neuromorphic systems are one of the most promising candidates to deal with the von Neumann bottleneck caused by the memory wall between the memory and processing units. Memristor synapses, which can be broadly classified into cation- and anion-based devices, can resolve this bottleneck owing to their ability to both store and transmit information. To obtain higher performance in neuromorphic systems, representative characteristics need to be improved, including the linearity of the weight update, the number of multilevel states, the dynamic range (ON/OFF ratio), variation, endurance, and retention. In this context, different memristor synapses based on novel materials and device structures have been introduced.
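Some of the characteristics listed above can be made concrete with a small numerical sketch. The saturating-exponential curve below is an assumed phenomenological shape chosen for illustration, not a model taken from this chapter, and the function names, the `nonlinearity` parameter, and the conductance bounds are all hypothetical. The sketch computes the conductance reached after each of a fixed number of identical pulses (the multilevel states), the ON/OFF ratio, and how far the curve departs from an ideal linear weight update.

```python
import math


def potentiation_curve(n_pulses, g_min, g_max, nonlinearity):
    """Conductance after 0..n_pulses identical pulses (assumed curve shape).

    nonlinearity = 0 gives a straight line; larger values saturate earlier.
    """
    levels = []
    for n in range(n_pulses + 1):
        x = n / n_pulses
        if nonlinearity < 1e-9:
            frac = x
        else:
            frac = (1 - math.exp(-nonlinearity * x)) / (1 - math.exp(-nonlinearity))
        levels.append(g_min + (g_max - g_min) * frac)
    return levels


def max_deviation_from_linear(levels):
    """Largest gap to the ideal straight line, as a fraction of the range."""
    g_lo, g_hi = levels[0], levels[-1]
    n = len(levels) - 1
    gaps = (abs(g - (g_lo + (g_hi - g_lo) * i / n)) for i, g in enumerate(levels))
    return max(gaps) / (g_hi - g_lo)


G_MIN, G_MAX = 1e-6, 1e-4   # hypothetical OFF and ON conductances in siemens
ideal = potentiation_curve(32, G_MIN, G_MAX, 0.0)
saturating = potentiation_curve(32, G_MIN, G_MAX, 4.0)
on_off_ratio = G_MAX / G_MIN

print(f"states per sweep: {len(ideal)}")
print(f"ON/OFF ratio: {on_off_ratio:.0f}")
print(f"deviation, ideal: {max_deviation_from_linear(ideal):.3f}")
print(f"deviation, saturating: {max_deviation_from_linear(saturating):.3f}")
```

With a saturating curve, early pulses change the conductance far more than late ones, so the same pulse produces a different weight change depending on the current state. This is one way to see why a linear weight update is listed among the properties to improve.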

Source: researchgate.net